Quantum geometry

This article is part of the Researching the unknown project, a collaboration with researchers from Queen Mary University of London, bringing you the latest research on the forefront of physics.

During the last 100 years or so physics has been chipping away at our intuitive understanding of the space we live in. Physicists tell us that rather than consisting of the familiar three dimensions, space is in fact part of a curved spacetime of at least four dimensions, perhaps more. Cosmologists are still unsure of the precise geometry of our universe, proposing a variety of odd shapes and even considering that it might be finite.

What happens to space at the smallest scale?

Out of all these new ideas there is one that perhaps defies intuition more than all others: that space, when you zoom in on it, stops being a smooth and continuous whole and starts breaking up into little indivisible chunks of some kind. This idea is truly mind-boggling. When you think of little chunks you can't help but think of them as existing inside something else and this something is — well, continuous. Another visualisation is to imagine space becoming fuzzy at this fundamental scale. But what exactly does that mean? Fuzzy with respect to what? Our macroscopic intuition simply isn't equipped to deal with a non-continuous space. Maths is the only language in which to talk about this, but ordinary geometry won't do — we need a completely new model of space. Shahn Majid from Queen Mary University of London has developed such a model, based on something called non-commutative geometry. His work is a fascinating blend of abstract algebra, theoretical physics, philosophy and experiment. Plus went to see him to find out more.

Quantum leaps

Max Planck, 1858 - 1947.

Our story starts at the end of the nineteenth century, when the first serious blows were dealt to the assumption that the world around us is inherently continuous. Physicists had found that electromagnetic radiation, such as light, behaved in a way that could not be explained by classical physics, which imagined it to take the shape of waves. In an attempt to find a new mathematical explanation, the physicist Max Planck took a radical step: he assumed that the energy of electromagnetic radiation did not vary continuously, as the wave picture suggested, but came in little packages called quanta. Albert Einstein later picked up on this idea, and by 1905 the notion that light travelled in continuous waves had been replaced by Einstein's photons: little units of light that can behave both like isolated particles and like waves.

The seed that had been sown by Planck and nurtured by Einstein and physicists including Niels Bohr later grew into what is now known as quantum mechanics. Quantum mechanics can be extremely counter-intuitive and to the uninitiated can seem downright crazy. At its heart lies the notion of wave-particle duality: the idea that, at a minuscule level, the world is not made up of point particles and continuous waves, but of some strange hybrid between the two, something that is neither but has characteristics of both. It suggests that concepts like "points" and "continuity" are not quite right when it comes to looking at the world at such a small scale.

Despite its apparent craziness, quantum mechanics does incredibly well at predicting physical processes at a sub-atomic level. It does have one huge problem though: it squarely contradicts Einstein's theory of general relativity, which describes the macroscopic world so admirably well. So ever since the conception of quantum mechanics physicists have been hunting for the one big unifying theory that can accommodate both: a theory of quantum gravity.

A new idea of space

Various attempts at constructing an all-encompassing theory of quantum gravity have been made, string theory being one of them, but so far there is no consensus as to which, if any, are correct. Some people think that a particular failing of many current theories is that they make too many assumptions about the nature of spacetime in their formulation. Rather than explaining our intuitions of what space and time are, they take these intuitions as a starting point. "The fundamental issue is that if spacetime is to emerge from quantum gravity, then it makes no sense to use our macroscopic intuitions about continuous spacetime as a starting point," says Majid. He suggests that to have any hope of formulating quantum gravity, we must first learn the lessons that quantum mechanics has taught us and give up on the idea that space is continuous.

And when we let go of the notion of continuity, the notion of a "point", so indispensable in ordinary geometry, goes with it. A point is a dimensionless object, something that has no length or breadth. But if space consists of indivisible chunks, then it's impossible to home in on a point: at some stage you simply cannot make things any smaller, so they can never shrink to something without length or breadth. "The idea of points is the root of many of the problems that plague quantum gravity," Majid says. "The point is a very abstract notion, it's something that is infinitesimally small. What is a point particle, for example? Isn't it really just an idealisation, a mathematical abstraction? More than likely there aren't any point particles in the world."

The idea of points that merge together to form a continuum lies at the very heart of ordinary geometry, so we need a completely different language to talk about the space that Majid is suggesting. As often when it comes to high level abstraction, it's algebra that comes to the rescue.

Algebra to the rescue

The fact that geometry and algebra are related is something you'll be familiar with from school. When you do the maths of, say, the two-dimensional plane, you start by defining two perpendicular axes that serve as a frame of reference. Every point in the plane now comes with two numbers, called co-ordinates, giving you the distance you have to travel along each axis to get to the point.

A circle of radius 1 and centered on the origin consists of those points whose co-ordinates satisfy $x^2 + y^2 = 1$.

Now think of a circle that is centered on the point with co-ordinates (0,0) and has radius one. Invoke Pythagoras's Theorem and you'll find that the circle consists of exactly those points whose co-ordinates (x, y) satisfy:

$$x^2 + y^2 = 1$$

The circle is completely defined by the algebraic relationship between point co-ordinates.
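As a rough illustration of how an algebraic relation can stand in for a geometric shape, the sketch below tests whether a pair of co-ordinates satisfies the unit-circle relation. The function name `on_unit_circle` and the tolerance are hypothetical choices made for this example only.

```python
import math

def on_unit_circle(x, y, tol=1e-9):
    """Return True if (x, y) satisfies x^2 + y^2 = 1 up to a small tolerance.

    The tolerance is an assumption of this sketch: it absorbs floating-point
    rounding, which the exact algebraic relation knows nothing about.
    """
    return abs(x * x + y * y - 1.0) <= tol

# Points built from an angle lie on the circle by construction.
angles = [0.0, math.pi / 6, math.pi / 2, 2.0]
points_on_circle = [(math.cos(a), math.sin(a)) for a in angles]

all_on_circle = all(on_unit_circle(x, y) for x, y in points_on_circle)
print(all_on_circle)              # every sampled point passes the test
print(on_unit_circle(0.6, 0.8))   # a scaled 3-4-5 triangle also lands on the circle
print(on_unit_circle(0.5, 0.5))   # this point lies inside the circle, so it fails
```

Nothing in the test refers to a drawing of the circle: membership is decided purely by the equation.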

Viewed in this way, algebra becomes a convenient language in which to talk about geometry. But let's look at this set-up in a slightly more abstract way. Co-ordinates describe a geometric object — in this case the plane — by associating each point of the object with numbers. Things that allocate a number to each of a collection of other things are what mathematicians call functions. So from now on we regard each of the two co-ordinates defined on the plane as a function.

The functions you can define on a geometrical space form a neat algebraic system of their own. You can, for example, multiply any two of them and the result will be a new function: the product xy of the two co-ordinate functions x and y, for instance, is the function that allocates to every point the product of its x-co-ordinate and its y-co-ordinate. Similarly, it makes sense to multiply a function by a number, or to add two functions. Just as for ordinary numbers, addition and multiplication of functions are subject to certain rules, for example you have that x+y is the same as y+x and that xy is the same as yx.
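The idea that functions on a space can be added and multiplied can be sketched in a few lines. Here a point of the plane is modelled as a pair of numbers and a function as a Python callable. The helper names `add`, `mul` and `scale` are hypothetical and only serve this illustration.

```python
# A point of the plane is a pair (x, y); a "function" assigns a number to each point.

def add(f, g):
    """Pointwise sum of two functions."""
    return lambda p: f(p) + g(p)

def mul(f, g):
    """Pointwise product of two functions."""
    return lambda p: f(p) * g(p)

def scale(c, f):
    """Multiply a function by a number."""
    return lambda p: c * f(p)

# The two co-ordinate functions.
x = lambda p: p[0]
y = lambda p: p[1]

xy = mul(x, y)
yx = mul(y, x)

# Checking a few sample points cannot prove commutativity in general, but it
# shows the rule xy = yx in action for ordinary number-valued functions.
sample_points = [(0.0, 0.0), (1.5, -2.0), (3.0, 4.0)]
commutes = all(xy(p) == yx(p) for p in sample_points)
print(commutes)
print(add(x, scale(2.0, y))((3.0, 4.0)))  # the function x + 2y evaluated at (3, 4)
```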

This may not seem very surprising: in the example above, we knew that the x and y were just place-holders for numbers, so it's obvious that you can multiply and add them and that the orders of addition and of multiplication do not matter. However, this abstract way of looking at things makes it possible to define the algebraic system generated by the co-ordinates of a geometric object without ever referring to the object itself. You simply say: "Here's a set of abstract objects called functions and here are some rules that tell you how to add and multiply them." In our example the plane can be replaced by an algebraic system involving the symbols x and y and the circle replaced by the same system with the additional constraint that $x^2 + y^2 = 1$.

Each geometric object (the plane, the circle, three-dimensional space or the surface of a doughnut) comes with its own stand-alone algebraic system, though, admittedly, constructing it is a little more technical than we're letting on here. The beautiful and amazing thing is that you can also go the other way. Start with an abstract algebraic system and, as long as it abides by certain rules, you can be sure that there's a geometric object attached to it. You can even reconstruct this object by performing some clever algebraic tricks. There's a tight, two-way relationship between geometry and algebra. The main class of algebraic systems that satisfy these rules, and hence correspond to geometric spaces, are called commutative C*-algebras (pronounced "C star algebras").

Order matters

We've now got two independent ways of describing objects like the plane or the circle: one is geometric and the other algebraic. This is exactly what we need in our quest for those strange point-less geometries. We may lack the geometric intuition needed to construct them, but we can get a grip on them using algebra. The idea is simple: start with an algebraic system that is similar to, but not quite the same as, a commutative C*-algebra and pretend that, just like a commutative C*-algebra, this also describes some kind of a space. It isn't going to be any of the spaces we know, but that's the whole point. This admittedly involves a leap of faith, since we've got absolutely no idea how to re-construct this "space", but we just ignore that and work with the algebra — this is the beauty of mathematical abstraction.

But what new kind of algebraic system should we choose? In which way should it differ from a commutative C*-algebra? It turns out that one particular feature of C*-algebras holds the key to all of this: it is called commutativity.

To say that two things commute means that the order in which you combine them doesn't matter. The co-ordinates in our example above commute under multiplication because xy is the same as yx. Most things you can think of off the top of your head do commute: the whole numbers do and so do the real and complex numbers. But there are also mathematical objects that do not commute. An example is given by rotations in three-dimensional space: rotate something about one axis, then about another axis. You do not usually get the same result if you compose the same rotations in reverse order.
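The failure of rotations to commute can be checked directly. The sketch below, which assumes quarter-turn rotations about the x-axis and the z-axis written as 3×3 matrices, composes them in both orders and applies each result to the same vector. The helper names `matmul` and `apply` are hypothetical.

```python
def matmul(a, b):
    """Product of two 3x3 matrices: the result means 'apply b first, then a'."""
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]

def apply(m, v):
    """Apply a 3x3 matrix to a 3-component vector."""
    return [sum(m[i][k] * v[k] for k in range(3)) for i in range(3)]

# Quarter-turn (90 degree) rotations; the entries are exact integers.
ROT_X = [[1, 0, 0],
         [0, 0, -1],
         [0, 1, 0]]
ROT_Z = [[0, -1, 0],
         [1, 0, 0],
         [0, 0, 1]]

x_then_z = matmul(ROT_Z, ROT_X)  # rotate about x first, then about z
z_then_x = matmul(ROT_X, ROT_Z)  # rotate about z first, then about x

v = [1, 0, 0]
print(apply(x_then_z, v))  # [0, 1, 0]
print(apply(z_then_x, v))  # [0, 0, 1]
```

The same starting vector ends up pointing along two different axes, so the order of the rotations matters.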

Non-commutative geometry is about taking algebraic systems that are in some way similar to commutative C*-algebras, but dropping the assumption that the things that form them commute — in a non-commutative algebra you simply don't assume that xy is the same as yx. We then pretend that there is nevertheless some kind of "space" attached to our system, and this space, whatever it may be, is called a non-commutative space. What Majid and his colleagues hope is that such a non-commutative space might give a better description of the real world than the ordinary notion of space we're used to.

Quantum uncertainty

Werner Heisenberg, 1901-1976.

But why should the feature of commutativity be the one to drop? There are purely mathematical reasons for this, but also some that are motivated by physics. Non-commutativity first raised its head when Werner Heisenberg helped to lay down the foundations of quantum mechanics in the 1920s. Heisenberg formulated the famous uncertainty principle, which highlights the problem with the idea of a point particle. The principle states that when you consider such a particle moving through space, for example an electron orbiting the nucleus of an atom, you can never ever measure both its position and its momentum as accurately as you like: the more you know about the one, the less precise you can be about the other. This isn't because your measuring instruments are too imprecise to pin down the value of, say, momentum once you've measured position. There simply isn't a "true" value of momentum, but a whole range of values that the momentum can take, each with a certain probability.

If it wasn't for the uncertainty principle, you could describe all the possible positions of the particle and its momentum by six numbers: three giving its spatial co-ordinates and three describing the momentum in each of the three directions. Thus, all the possible states the particle could be in would together form a six-dimensional space called the phase space. Phase space viewed in this way is nothing more mysterious than a higher-dimensional analogue of the ordinary space we're used to.

The uncertainty principle makes a nonsense of this idea of phase space, since it's impossible to ever determine the six co-ordinates accurately. Erwin Schrödinger, another protagonist in the development of quantum mechanics, had dealt with this by replacing the points in six-dimensional phase space by sophisticated mathematical objects called wave functions, which encode all the information there is about the possible states of the particle. As a consequence, the six co-ordinates describing position and momentum had to be replaced also. Their role is played by objects called operators. They behave just like matrices and in Heisenberg's formulation of quantum mechanics it is they that play the fundamental role. Just like co-ordinates, the operators are part of an algebraic system and, you've guessed it, this system isn't commutative. The operators for position and momentum do not commute with each other, which means that if you measure where a particle is and then measure its momentum, you get a slightly different answer than if you first measure its momentum and then its position. This is at the root of why you cannot perfectly measure both at the same time.
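In symbols, the failure of position and momentum to commute is usually written as the canonical commutation relation. The notation is the standard textbook one rather than anything specific to this article: $\hat{x}$ and $\hat{p}$ are the position and momentum operators along one direction, $\hbar$ is the reduced Planck constant, and $\Delta x$, $\Delta p$ are the spreads in the measured values.

$$\hat{x}\hat{p} - \hat{p}\hat{x} = i\hbar$$

$$\Delta x \, \Delta p \geq \frac{\hbar}{2}$$

The first line says by how much the two orders of multiplication differ. The second is the uncertainty principle that follows from it.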

A new model of space

A vision of non-commutative geometry. Image created by Shahn Majid, used with permission.

The mathematics of non-commutative geometry was pioneered by several great mathematicians, including the legendary Russian mathematician Israil Gelfand, who with his collaborators proved the first key theorems about C*-algebras, and in modern times the Fields medallist Alain Connes. Connes recognised that non-commutative geometry could be immensely useful for theoretical physics. One of his ideas here, loosely speaking, is to reformulate the basic pattern of elementary particle physics by appending non-commutative "extra dimensions" to the usual classical notion of spacetime. This model is not about quantum gravity in the first instance; the space-time coordinates x, y, z, t (three for space and one for time) form an ordinary commutative algebraic system for spacetime as usual, but Connes then "tacks on" non-commutative matrix coordinates that neatly encode all the different types of particles. His theory is not only exceedingly elegant, but makes a number of predictions that had previously eluded physicists, including predictions about the masses of fundamental particles like the mysterious Higgs boson.

Majid has taken non-commutative geometry down a slightly different road. In his work he forgets about the classical picture of spacetime altogether. He still has the four objects that take the place of the four co-ordinates — x, y and z for space and t for time — but now these co-ordinates do not commute: xt is not the same as tx. Together the co-ordinates form a non-commutative algebraic system and spacetime would then be the mysterious geometrical space that we're hoping comes attached to this system.

But simply saying that the co-ordinates don't commute is not quite enough. If xt is not the same as tx, then in order to construct a specific system we must say by how much they differ. Majid suggests that a number called the Planck scale measures this amount of non-commutativity.
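To give a flavour of what such a rule can look like, here is one form of commutation relation that appears in the research literature on non-commutative spacetime models of this kind. It is not spelled out in this article, so treat it as an illustrative assumption: $x_i$ stands for any of the three space co-ordinates, and $\lambda$ is a constant of the order of the Planck scale (expressed as a time).

$$x_i t - t x_i = i \lambda x_i, \qquad x_i x_j - x_j x_i = 0$$

Setting $\lambda = 0$ would give back ordinary, commuting co-ordinates, which is one way to see that $\lambda$ measures the amount of non-commutativity.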

The Planck scale is a length scale. In some sense it's the length scale at which space stops being "normal". If you take an object of a given mass and compress it into a smaller and smaller region of space, then it will eventually form a black hole: its gravitational field will become so strong that nothing, not even light, can escape from its vicinity. If a black hole (or more correctly the vicinity controlled by it) is tiny, with a radius of $1.616 \times 10^{-33}$ cm or less, then quantum theory also enters any attempt to describe it. This length scale, $1.616 \times 10^{-33}$ cm, is the Planck scale.
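The quoted value can be reproduced from three constants of nature using the standard textbook expression for the Planck length, the square root of ħG/c³. The formula and the constant values below are not given in the article; they are the commonly tabulated figures and are assumptions of this sketch.

```python
import math

# Commonly tabulated values of the constants (assumed here, not from the article).
HBAR = 1.054571817e-34  # reduced Planck constant, in joule seconds
G = 6.67430e-11         # Newton's gravitational constant, in m^3 kg^-1 s^-2
C = 299792458.0         # speed of light, in metres per second

# Planck length: the unique length that can be built from hbar, G and c.
planck_length_m = math.sqrt(HBAR * G / C**3)
planck_length_cm = planck_length_m * 100.0

print(f"{planck_length_cm:.3e} cm")  # 1.616e-33 cm
```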

Another point of view, this time coming from quantum theory, is that because of their wavelike nature photons, electrons or any other particles used to probe geometry can only ever achieve a certain resolution, inversely proportional to their mass-energy. To resolve the Planck scale you would need particles with mass-energy so high that gravity also enters any attempt to describe them. "What's happening here is that as you try to probe these smaller and smaller sizes," Majid explains, "the particles you're considering form black holes. To understand them you need both a theory of quantum phenomena and of gravity and that's the theory we don't have. Close to the Planck scale is where physics as we know it breaks down. It's where the quantum gravity regime kicks in."

This diagram illustrates the mass-energy and size that objects in our universe can have. Certain combinations of mass-energy and size are prohibited by the laws of quantum theory (left-hand wedge) and others by gravity (right-hand wedge). The Planck scale corresponds to the point where the diagonals bounding the two forbidden regions intersect. It's where the physics we know breaks down. And in case you were wondering, Cygnus X-1 is a black hole. Image from the book Foundations of Quantum Group Theory by Shahn Majid, used with permission.

In Majid's model, if you measure x and then t, you get a different answer than if you measure first t and then x. In uncertainty terms, you can't measure both where and when an event takes place in the same experiment with perfect accuracy. There will be an uncertainty of magnitude set by the Planck scale.

To the test

It's fascinating to see physics and abstract algebra coming together in this way. But is Majid's theory more than just a toy model, an entertaining diversion for theorists? We may soon know the answer to this question: excitingly, Majid's model can be tested against reality. Although we're now dealing with a new mathematical description of spacetime, it is still possible to use established physical theories to predict how physical systems should behave if the model were correct. You do need to reformulate some of the maths and physics involved to get rid of the assumption that things commute, but this can be done using algebra.

With this new maths it is possible to look again at the wave equation, which describes the propagation of waves including light waves or, more accurately, photons, and see how it is modified. "What the theory predicts is that a photon's velocity depends on its energy: more energetic photons should travel more slowly than less energetic ones," says Majid, "and this is a prediction we can test." Guinea pigs to perform the tests on come in the shape of gamma ray bursts, bright bursts of electromagnetic radiation that happen frequently all over the universe.

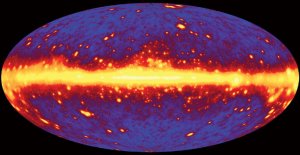

A simulated image of a gamma ray universe, as it will be seen by GLAST. Image courtesy NASA, credit Seth Digel, Stanford University.

"The gamma rays [a type of photon] from a burst come with a wide variety of energies and they travel cosmological distances. According to the theory, the Earth arrival times of less energetic rays should differ from more energetic ones by a few milliseconds. That's detectable." Looking at the rays from a single gamma ray burst is not enough, since we don't know what the original distribution of energies was. However, looking at a large number of bursts and analysing them statistically should give some clues as to whether the energetic photons really do arrive with a delay.
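A back-of-the-envelope estimate shows why delays of this size are plausible. The sketch below is not the calculation from Majid's model. It assumes the simplest possible rule, in which the fractional slowdown of a photon equals its energy divided by the Planck energy, and it ignores the expansion of the universe. The function name, the sample energy difference and the sample distance are all hypothetical.

```python
# Assumed round values, not taken from the article.
PLANCK_ENERGY_GEV = 1.22e19  # Planck energy in giga-electronvolts
SECONDS_PER_YEAR = 3.156e7   # approximate length of a year in seconds

def arrival_delay_seconds(energy_difference_gev, distance_light_years):
    """Rough delay between two photons emitted together.

    Assumes a speed deficit proportional to energy / Planck energy, so the
    delay is that fraction of the total light travel time.
    """
    travel_time_s = distance_light_years * SECONDS_PER_YEAR
    return (energy_difference_gev / PLANCK_ENERGY_GEV) * travel_time_s

# Two photons differing by 0.1 GeV that travel ten billion light years.
delay = arrival_delay_seconds(0.1, 1.0e10)
print(f"{delay * 1000:.1f} ms")  # 2.6 ms
```

Under these assumptions the delay comes out at a couple of milliseconds, the same order of magnitude as the figure quoted above.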

"NASA's GLAST (Gamma-ray Large Area Space Telescope) satellite will be launched later this year and part of its mission protocol is to provide data that could detect such a variable speed of light. I'm not saying that it will definitely prove the theory correct (this is only the simplest model you could think of), but at least it has testable predictions; it's not just pie in the sky. We live in a very exciting era where mathematics, philosophy and experiment all come together."

About this article

Shahn Majid is Professor of Mathematics at Queen Mary University of London. Educated at Cambridge and Harvard, he was one of the pioneers of the theory of quantum groups in the late 1980s and early 1990s. He is the author of numerous research articles and two textbooks on quantum algebra. He is currently on sabbatical at the Department of Applied Mathematics and Theoretical Physics at the University of Cambridge.

Marianne Freiberger is co-editor of Plus.

Comments

Anonymous

I have a pending manuscript at Physics Essays publication. We use sphere eversion as a model. A half-way model of a Boy's Surface represents energy flow in any direction. The uncertainty principle allows for energy to flow through plus, zero, minus curvature. We propose the attribute of thermal energy crosses over to potential mass and gravitational potential. I am interested in communicating with other researchers about these ideas. Can you offer contact information? Sincerely Francis D Moore

Rachel

Hi Francis,

You'll find a link to Shahn's homepage in the 'About the Author' section above.

Best wishes from the Plus Team

Anonymous

The way the uncertainty principle is discussed implies that not being able to measure position and momentum simultaneously is some kind of mystery. Isn't it due to the fact that the act of measuring one or the other interferes with the measurement of the other? That to measure anything about a subatomic particle requires another particle or a magnetic field to impinge on the particle and produce a detectable change in state? And since how the particle's other parameter is changed can't be known, it has to be described probabilistically?

Anonymous

This is a common, though apocryphal, idea. The Observer effect is a real and intuitive effect by which observing something changes its state. However, the Uncertainty principle is a fundamental part of the universe and is unrelated to the Observer effect, despite the fact that the Observer effect is usually touted as an intuitive explanation for the more esoteric Uncertainty Principle.

Anonymous

In short, no! The indeterminacy principle (to use an English word closer to Heisenberg's German word) is a fundamental fact of nature and has nothing to do with interference by the measuring object (although that is also true).

I would love the answer to be yes, as my respect for Einstein is greater than for nearly all quantum physicists. I admit that many textbooks written for laymen (the only kind I can understand) do quote your point, but this is a misunderstanding of the indeterminacy problem. Given a hypothetical apparatus that could measure without affecting the particle, the indeterminacy principle would still apply.

Dean Reza Sanaye

The best disclosing of Q_Geometry [ let me say : topology of Quantum Physics ] which I have ever come across. . . .

Prof. REZA SANAYE

Director to Eurasian Polymaths' Society