The future of proof

Are mathematicians ever going to be replaced by computers? If maths was all about routine calculations, then the answer would most definitely be yes. But if you've ever tried to come up with a mathematical proof, or even played with a logic puzzle, you know this involves intuition and leaps of imagination you'd think are beyond any computer. Even just deciding which kind of questions are mathematically interesting, and which are boring or beyond reach, seems to be something that needs human input.

The panel debating the future of proof at the B(A)MC. From left to right: David Tranah, June Barrow-Green, Tim Gowers, Andrew Pitts and Ursula Martin.

Yet, humanity's role in the future of mathematical proof was being discussed at the British (Applied) Mathematics Colloquium last week, by a panel of people who know the territory very well: the mathematician (and Fields medallist) Tim Gowers, the historian of mathematics June Barrow-Green, computer scientists Andrew Pitts and Ursula Martin, and David Tranah representing Cambridge University Press, an important publisher of mathematics research.

Computer-assisted...

Mathematical proofs that rely on the help of a computer are nothing new. The first example was the 1976 proof of the four-colour theorem, which says that any map drawn on a flat piece of paper can be coloured with just four colours, without any two adjacent regions having the same colour. To verify the result, a computer checked through a large number of particular maps, and made sure that none of them amounted to a counter example of the supposed theorem. In 1998 the famous Kepler conjecture, which concerns the question of how to best stack spheres (such as oranges) so as to optimise the use of space, leaned even more on computer help: its "proof" contained over 3 gigabytes of computer code and data. (See Welcome to the maths lab for more details about these results.)

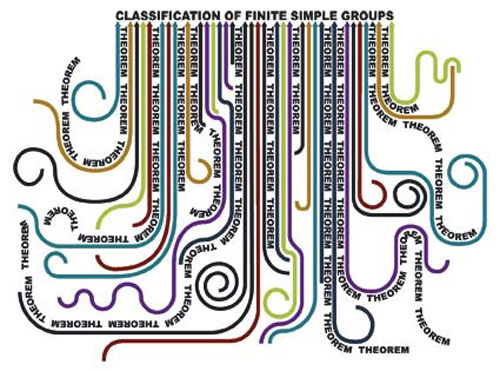

The problem with these kind of proofs is that no single person is able to check that the computer hasn't made a mistake. Some would argue that the results therefore can't be considered 100% proven, but others are happy to accept them. And as Barrow-Green pointed out, proofs that can't possibly be contained in a single person's head don't necessarily involve computers: an example is the collaborative effort by over one hundred mathematicians to complete the classification of finite simple groups. Neither is the use of computers a new thing. Martin quoted the example of Hardy and Ramanujan, who in the early twentieth century used tables calculated by a human computer, Major MacMahon, in their work on the partition function.

... or computer-generated

Computers have also entered mathematics in a more dramatic way, as more than mere calculators. Automated theorem provers (ATPs) are computer programs that can use the rules of logic to prove mathematical results: they can show that a result (phrased in a suitable language of logic) can be derived logically from a set of basic assumptions, or axioms.

ATPs have had successes in mathematics, but, interestingly, have also found applications in areas that impact on all our lives. Computer programs are used in a huge range of areas, from controlling passenger planes or nuclear reactors to heart pacemakers. In order to be safe (and/or save lots of money), you need to know that such systems work correctly. One way of ensuring this is to test a system many times in many situations and make sure it always does the right thing – but that can be time consuming and, crucially, if you can't test all possible scenarios, it might still miss errors. ATPs can be used instead, to mathematically verify that a specific hardware system or the code that runs on it is correct and will always perform as it should.

A (slightly unrealistic) map coloured using four colours.

ATPs still need a lot of human input to work, but Pitts believes that, in computer science at least, a sea change is happening. Young researchers are no longer bothered about the nature of proof. Instead they use automated proving systems "like video games": picking up automatic proof tactics – "like blasters" – and pointing them at problems to see if they work.

Many mathematicians would probably find this development slightly sad: as Pitts pointed out, it means that people become less concerned with making a mathematical argument elegant and well-structured. A computer doesn't care if a proof uses a brute-force approach, for example checking through zillions of possibilities – its aim is simply to find a proof. A human mathematician, by contrast, will always search for a higher-level principle that unites all these possibilities in one elegant step. And indeed, the proofs used for verification processes in computer science, so Pitts, are usually "large and ugly".

No room for humans

But perhaps these developments needn't concern mathematicians too much; after all, they are only really applications of maths in a different field. But Gowers believes that even within mathematics itself grand things are still to come. What differentiates human mathematicians from computers (or perhaps even computer scientists) is not only that they like their proofs elegant and beautiful. They would also like them to provide some insight into why a result is true. And they discover proofs in what seems to be a uniquely human way, for example through high-level associations with other areas of maths or science that a computer in its mechanical myopia (apparently) has no chance of making.

But Gowers argues that even within the human way of doing maths there is something objective going on. Think of a student who is diligent and hard-working, but not too bright. If you're an experienced teacher, you perhaps feel there's a chance of formally capturing the way such a student might discover a proof – and the question of how a computer might discover it is not too dissimilar. If we can gain a formal understanding of what exactly we mean by "proof", how people discover proofs, and create a cleverly classified database of existing maths as background knowledge, then perhaps we can one day enable computers to prove results just like human mathematicians do.

Gowers believes there's a good chance that human mathematicians will indeed have maneuvered themselves out of the game by the end of this century. And once computers are good enough to prove results, they will also become good at deciding which results to prove, thus entirely ridding themselves of the need for human guidance.

If you're of a philosophical bent, then one objection immediately springs to mind. Computers have no choice but to use an axiomatic approach to maths. Their logical deductions need to be based on a set of axioms and rules, and you could argue about what these should be. What is more, Kurt Gödel's work from the 1930s (see here) shows that within any decent axiomatic system there will always be statements that you cannot prove or disprove.

This might seem to put a limit to what computer mathematicians can do, but if you think about it, not more than it limits human mathematicians. We still do maths in the face of these problems and most mathematicians only worry about such foundational issues on their days off, if at all. And if automated mathematicians become as good as Gowers suggests, then perhaps they will one day be able to reason about those issues themselves.

Crowd maths

The classification of finite simple groups involved over 100 mathematicians pulling together the many strands of the theorem.

Apart from potentially providing non-human mathematicians, there is also another way that technology impacts on the way we do maths. As Barrow-Green pointed out, maths has never been a single-person process. Our standards of proof have changed over the millennia, so people have re-visited results again and again, coming up with new proofs and new ways of looking at them. Today technology enables people to collectively work on a result, not just over time, but simultaneously. One example of these collaborative proofs is the classification of finite simple groups, mentioned above, which was completed in 2004 and involved over 100 mathematicians spread across the world – something that surely would not have been possible in the days of snail mail. Another example is the Polymath project set up by Gowers, which allows mathematicians to collaborate by posting their thoughts about a problem online or commenting on the thoughts of others.

This development, argues Tranah, should change the nature of how mathematics is vetted and published. At the moment mathematicians send their papers to academic journals, which subject them to review by other experts in the field, and publish them if those experts deem the results correct and interesting. The journals act as record keepers of the maths that's being done, and have traditionally been the places you go to when looking up results.

Today, so Tranah argues, these journals are no more than "junk mail", really only benefitting the authors of papers and their institutions, as a track-record of their work. When mathematicians look for new results, they don't wait for papers to appear after a lengthy review process (which can take years in some mathematical fields). Instead, they turn to pre-print servers where papers are posted before they have been officially published, and use a mathematician's reputation and their own expertise to assess a paper's merits. So perhaps, the traditional peer review process could be replaced by a crowd review process. Interesting papers will attract the online attention of many mathematicians, who over time will add corrections and comments to a paper. This process, so Tranah, will separate the good papers from the bad and ensure they are correct. Publishers would no longer need to bother with peer reviews and thus save time and money. They could even charge the authors of papers for publication, if they truly are the main beneficiaries of the publishing process.

Explanation versus truth

Understanding how proofs are developed is of vital interest to everyone on the panel. Computer scientists need this information so that they can create more powerful automated systems. Historians and philosophers are looking for insights into the culture of mathematics and what is considered valid in the mathematical community. And mathematicians want to learn how to do more mathematics. "This [understanding how ideas develop] is what we do when we're having coffee with friends," says Gowers. And this, he says, is far more powerful than the final proofs that appear in the academic literature which give little clue to how they were discovered.

At the heart of this debate is the question: what are proofs for? Are they merely certificates of truth, or should they reveal why something is true? For many mathematicians, proofs that explain why something is true hold the greatest value. Could such proofs ever be achieved by a computer? And what would that be worth anyway, if no human could ever understand that explanation?

Further reading

Find out more about proofs in the following articles:

- Why we want proof

- Welcome to the maths lab

- The origins of proof

- The origins of proof II: Kepler's proofs

- The origins of proof III: Proof and puzzles through the ages

- The origins of proof IV: The philosophy of proof

You can see all Plus articles to do with proofs here.

About the authors

Marianne Freiberger and Rachel Thomas are Editors of Plus.