Good-looking gibberish

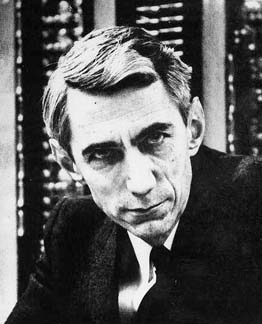

How would you approximate the English language? In the 1940s the mathematician Claude Shannon asked himself just this question. Given a machine that can produce strings of letters, how would you set it up so that the strings it produces resemble a real English sentence as closely as possible?

Claude Shannon.

A naive approach is to imagine a keyboard that contains all possible letters and punctuation marks, as well as a space bar, and get the machine to stab at the keyboard at random, with each key having the same probability of being hit. Shannon produced a message using essentially this method and it reads as follows (it appears Shannon only allowed capitals):

XFOML RXKHRJFFJUJ ZLPWCFWKCYJ FFJEYVKCQSGHYD QPAAMKBZAACIBZLHJQD.

That's not a very good attempt at a meaningful sentence.

The next-best approximation would be to choose the letters, and other symbols, with the frequency they actually have in the English language. For example, according to Shannon, the letter E appears with the frequency 0.12 (12% of letters in a text will be an E) and the letter W with frequency 0.02. So you could set your machine up to hit the E key with probability 0.12, the W key with probability 0.02, and so on. A message generated in this way already looks a tiny bit like a real language, though not exactly English:

OCRO HLI RGWR NMIELWIS EU LL NBNESEBYA TH EEI ALHENHTTPA OOBTTVA NAH BRL.

To do even better, you could make the probability of a symbol appearing dependent on the previous letter. This reflects the fact that certain two-letter combinations — such as TH — are much more common than others — such as BD. Using this approach Shannon generated the sequence

ON IE ANTSOUTINYS ARE T INCTORE ST BE S DEAMY ACHIN D ILONASIVE TUCOOWE AT TEASONARE FUSO TIZIN ANDY TOBE SEACE CTISBE.

Similarly, you could make the probabilities of hitting the various symbols depend, not just on the previous letter, but on the two previous letters:

IN NO IST LAT WHEY CRATICT FROURE BIRS GROCID PONDENOME OF DEMONSTURES OF THE REPTAGIN IS REGOACTIONA OF CRE.

This does look like real English at first sight, there are even real words in there, but it still makes no sense whatsoever. At this point you might decide to get your machine to pick whole words from a dictionary, rather than individual letters from a keyboard. As before, you could get it to pick words independently of each other, but with probabilities that reflect their frequencies in the English language:

REPRESENTING AND SPEEDILY IS AN GOOD APT OR COME CAN DIFFERENT NATURAL HERE HE THE A IN CAME THE TOOF TO EXPERT GRAY COME TO FURNISHES THE LINE MESSAGE HAD BE THESE.

Or you could make the probability of picking a word dependent on the previous word in a way that reflects real English:

THE HEAD AND IN FRONTAL ATTACK ON AN ENGLISH WRITER THAT THE CHARACTER OF THIS POINT IS THEREFORE ANOTHER METHOD FOR THE LETTERS THAT THE TIME OF WHO EVER TOLD THE PROBLEM FOR AN UNEXPECTED.

That's not bad! Shannon seems to have stopped there, but imagine continuing in this vein, making a word's probability dependent on the previous two, three, four, etc words. At some point you'd probably produce something that reads quite well.

Shannon published these examples in his 1948 landmark paper A mathematical theory of communication. His aim was to make communication via media such as telegraphy, TV and radio more efficient. The examples here might seem a bit silly, but the overall theory he developed became so influential that Shannon has become known as the father of information theory.

Another concept, which is related but different, involves a monkey randomly typing on a type writer with each key having the same probability of being hit — that's the situation we had in the first example above. However, rather than producing one short string, you give the monkey an infinite amount of time to type away. The idea is that eventually the monkey will produce something meaningful, such as the complete works of Shakespeare. To find out just how long you would have to wait for that, see Infinite monkey business.

Comments

Anonymous

I saw some software that worked like this several years ago. While it generated output that was essentially meaningless, it was easily recognizable which author was used for input if the author had a distinct writing style.

Anonymous

Great article. Reminds me of the SCIgen "utility". Though, by now, I wonder how sophisticated these programs have become.

Link: http://www.nature.com/news/publishers-withdraw-more-than-120-gibberish-…