Article

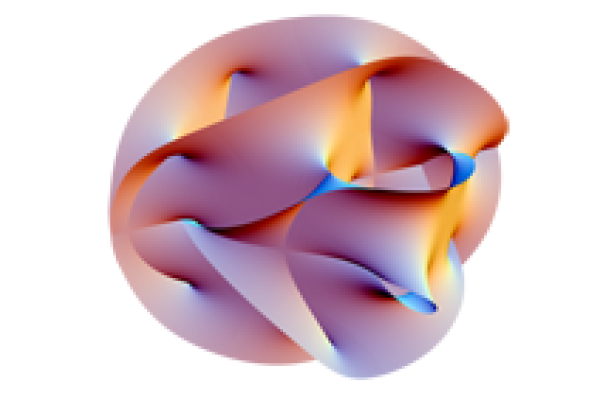

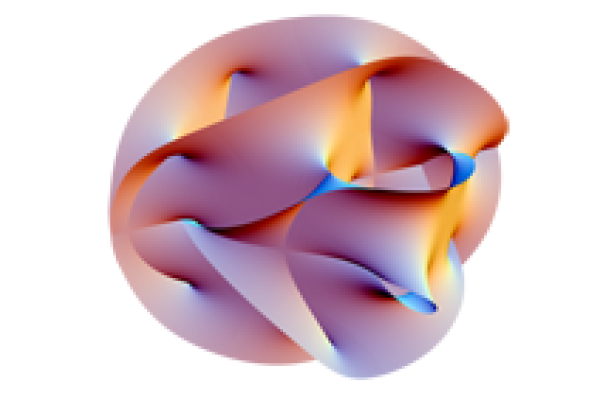

String theory: Convincing mathematics

Find out how a theory from physics has provided tools for solving long-standing problems in number theory, and how number theory in turn helps solve the mystery of black holes.