Learning the unseen

Brief summary

This article explores how deep learning can be combined with traditional techniques to improve medical images such as those produced by CT, MRI, and PET scans.

Artificial intelligence, especially the generative variety, continues to grab the headlines. Its results are astonishing, scary for some, but not infrequently they are also nonsense.

What isn't so well-known is that there are other forms of AI, designed for more specific tasks, whose applications are less frightening and genuinely useful. An example comes from imaging, particularly in the medical realm.

On the scanning table

If you've ever had a CT, MRI or PET scan you'll know that it isn't a comfortable experience. You have to lie perfectly still for what feels like an eternity. CT and PET scans also expose you to ionising radiation, which carries risks of its own. And if you were injured in an accident, there'd be no way the scanner could come to you to provide a diagnosis there and then: portable scanners that could routinely do this don't yet exist.

Quicker and smaller scanners would be a huge improvement, not only for your personal safety and scanning experience, but also for cutting NHS waiting lists. This is where artificial intelligence offers new possibilities. Riccardo Barbano, Alexander Denker, and Hok Shing Wong, from the Mathematics for Deep Learning (maths4DL) research programme, are three researchers who are exploring ways of deploying it in medical imaging. The aim is to come up with safe and reliable methods that improve the diagnostic value of imaging techniques while hopefully reducing the amount of radiation, and discomfort, that patients are exposed to.

Inverse problems

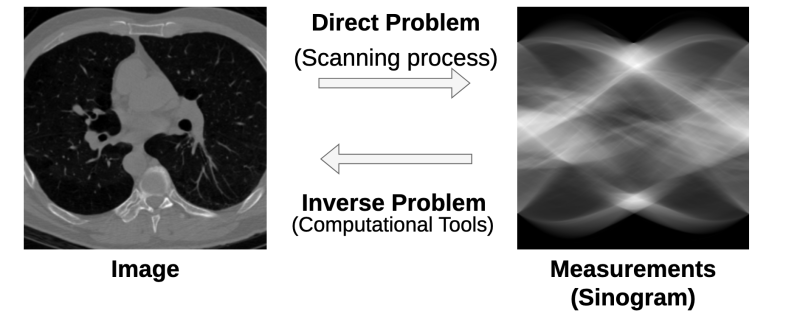

The reason these scanning techniques aren't quicker is that they don't produce images directly, as a camera does. Instead, they provide data (for example, the intensity of X-rays after they have passed through the body), from which the image then has to be reconstructed.

It's an example of an inverse problem: you need to infer, from your observations, the properties of the object that caused the observations. You'll have come across an inverse problem if you've ever tried to guess the shape of an object from its shadow. And if you've ever done that you'll know that you need mathematics, in this case geometry, to solve the problem.

Inverse problems are notoriously hard to solve. One difficulty is that there can be more than one answer. Different shapes can cast an identical shadow. Similarly, different configurations of organs, bones and tissue could give rise to the same data when scanned. "The problem is what we call ill-posed," says Denker. "There might be two images that lead to the same measurements."
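
To see concretely how two different images can produce the same measurements, here is a toy sketch in Python. It assumes a hypothetical "scanner" that records only the shadows of a 2×2 image from two directions, that is, its row sums and column sums. Real scanners measure far more, but the ambiguity is of the same kind.

```python
def shadows(image):
    """Toy forward operator (hypothetical): the 'shadows' of an image from two directions.

    Returns the row sums (light shining from the side) and the
    column sums (light shining from above) of a grid of pixel values.
    """
    row_sums = [sum(row) for row in image]
    col_sums = [sum(col) for col in zip(*image)]
    return row_sums, col_sums


# Two different images: a bright diagonal and a bright anti-diagonal.
image_a = [[1, 0],
           [0, 1]]
image_b = [[0, 1],
           [1, 0]]

print(shadows(image_a))    # ([1, 1], [1, 1])
print(shadows(image_b))    # ([1, 1], [1, 1])
print(image_a == image_b)  # False
```

Because the measurements are identical, no reconstruction method can tell these two images apart from the data alone; some extra knowledge about which images are plausible is needed to choose between them.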

The difficulty is compounded by the fact that the measurements a scanner produces will never be 100% exact; in other words, the data will be noisy. The noise can come from the patient moving (they need to breathe and swallow, and they might even twitch), from the fact that no measuring instrument is perfectly precise, and from all sorts of other environmental factors. "Whenever you acquire data, there will be some form of noise," says Barbano.
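
Noise matters so much because, in an ill-posed problem, tiny errors in the data can turn into large errors in the reconstruction. The following Python sketch uses NumPy and a made-up 2×2 forward operator (an assumption chosen for illustration, not a real scanner model) whose two measurements are almost, but not quite, the same.

```python
import numpy as np

eps = 1e-4
# Hypothetical forward operator: two nearly identical measurements.
A = np.array([[1.0, 1.0],
              [1.0, 1.0 + eps]])

x_true = np.array([1.0, 1.0])
y_clean = A @ x_true              # exact measurements: [2, 2 + eps]
noise = np.array([0.0, eps])      # a measurement error of one part in ten thousand
y_noisy = y_clean + noise

x_from_clean = np.linalg.solve(A, y_clean)
x_from_noisy = np.linalg.solve(A, y_noisy)

print(x_from_clean)  # close to [1, 1]
print(x_from_noisy)  # close to [0, 2]
```

A tiny error in one measurement shifts the reconstruction from [1, 1] to [0, 2], which is why reconstruction methods need to do more than simply invert the forward operator.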

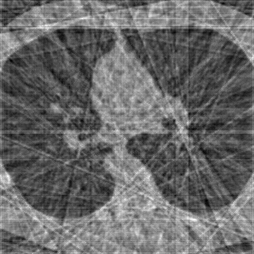

To mitigate these problems you need to collect as much data as you can get away with. That's why scans take as long as they do and require motionless subjects and huge machines. The image below illustrates what can go wrong if you reduce the amount of data collected. In a CT scan the patient is placed in a ring and X-rayed from many angles around the ring. If you reduce the number of angles, the reconstructed image shows streak-like lines, known as artefacts, that seriously degrade its quality.
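
Why do fewer angles hurt? Each viewing direction contributes one set of line sums, and fewer line sums leave more images consistent with the data. The Python sketch below is a heavily simplified, hypothetical model, not how a real CT scanner is modelled: it builds a matrix of line sums through a 3×3 image along two or four directions and uses NumPy's `matrix_rank` to count how many independent equations the measurements provide.

```python
import numpy as np


def line_sum_matrix(n, directions):
    """Each row adds up the pixels of an n-by-n image along one line.

    Supported (hypothetical) directions: 'horizontal', 'vertical',
    'diagonal' and 'antidiagonal'.
    """
    keys = {
        "horizontal": lambda i, j: i,
        "vertical": lambda i, j: j,
        "diagonal": lambda i, j: i - j,
        "antidiagonal": lambda i, j: i + j,
    }
    rows = []
    for direction in directions:
        key = keys[direction]
        lines = {}
        # Group pixel indices by the line they lie on.
        for i in range(n):
            for j in range(n):
                lines.setdefault(key(i, j), []).append(i * n + j)
        for pixels in lines.values():
            row = np.zeros(n * n)
            row[pixels] = 1.0
            rows.append(row)
    return np.array(rows)


n = 3
few = line_sum_matrix(n, ["horizontal", "vertical"])
many = line_sum_matrix(n, ["horizontal", "vertical", "diagonal", "antidiagonal"])

print(np.linalg.matrix_rank(few), "of", n * n)   # 5 of 9
print(np.linalg.matrix_rank(many), "of", n * n)  # 9 of 9
```

With two directions the 6 measurements amount to only 5 independent equations for 9 unknown pixels, so many different images fit the data; with four directions this toy image is determined uniquely. Real scanners face the same trade-off on a vastly larger scale.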

Working backwards

The aim of Denker, Barbano and Wong is to improve existing methods in CT, MRI and PET scans and to perhaps also enable scanning approaches, such as electrical impedance tomography, that just won't work properly with traditional maths. To do this they employ deep learning, the mathematical technique that drives the type of artificial intelligence that has seen so much success in recent years. Put simply, deep learning learns statistical patterns within data by looking at lots of examples (find out more in this article). It can then use the knowledge of these patterns to produce desired outputs.

As far as medical imaging is concerned, you could train a deep learning model on lots of data sets acquired by scanners (for CT these are called sinograms: they record the measurements taken at each angle around the ring), together with the corresponding correctly reconstructed images. By spotting patterns that link inputs to ideal outputs, the model would then learn to reconstruct an image all by itself from a new set of measurements (a new sinogram).
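
As a much-simplified illustration of this purely data-driven approach, the Python sketch below learns a reconstruction map from example pairs alone. A linear map fitted by least squares stands in for the deep network, and a random matrix stands in for the scanner; both are assumptions for illustration. The fitting step only ever sees measurements and images, never the forward operator itself.

```python
import numpy as np

rng = np.random.default_rng(0)
n_pixels, n_measurements, n_examples = 8, 12, 200

# Hidden 'scanner' (a random stand-in), used only to generate the examples.
A = rng.normal(size=(n_measurements, n_pixels))

# Training pairs: images X and their noisy measurements Y (one pair per column).
X = rng.normal(size=(n_pixels, n_examples))
Y = A @ X + 0.001 * rng.normal(size=(n_measurements, n_examples))

# Learn W so that W @ Y is as close as possible to X (least squares).
# lstsq solves Y.T @ W.T ≈ X.T for W.T.
W = np.linalg.lstsq(Y.T, X.T, rcond=None)[0].T

# Reconstruct a new, unseen image from its measurements.
x_new = rng.normal(size=n_pixels)
y_new = A @ x_new + 0.001 * rng.normal(size=n_measurements)
x_rec = W @ y_new
print(np.linalg.norm(x_rec - x_new) / np.linalg.norm(x_new))  # small relative error
```

Nothing in the fitted map refers to the physics of the scanner; everything it knows was extracted from the example pairs.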

This approach is a purely statistical endeavour, making use solely of patterns within data while ignoring our physical understanding of what's going on. Denker and Barbano are working on an alternative approach that does bring in existing human knowledge. "We combine techniques from the [mathematical field that looks at inverse problems] with the deep learning field," says Denker.

The traditional mathematical approach to solving the inverse problem posed by scanners uses our understanding of how the scanner's measurements are produced in the first place to then work backwards and reconstruct the image. When you try and guess the shape of an object from its shadow you do something similar. You use the knowledge that shadows arise when light rays are blocked to infer what kind of shape might have blocked them. This involves the geometry of straight lines.

When it comes to medical imaging the mathematics is more sophisticated, involving mathematical objects such as the Radon transform and the Fourier transform. But the general idea is the same. "Classical techniques use the so-called forward operator to try and go backwards to recover the original image," says Denker. The forward operator is the mathematical description of how the scanner turns an image into measurements. Often the backward step is done using an iterative process (such as a lovely technique known as gradient descent): an initial stab at the solution to the difficult inverse problem is improved step by step by repeating the same type of calculation many times, each time nudging the current image so that the measurements it would produce agree better with those actually recorded. You can find out more about traditional techniques in this article and about gradient descent in this article.
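
The iterative idea can be written down compactly. If A is the forward operator and y the measured data, gradient descent looks for an image x whose simulated measurements Ax match y by repeatedly stepping downhill on the mismatch ||Ax - y||²/2, whose gradient is A^T(Ax - y). Applied to this least-squares mismatch, the scheme is also known as Landweber iteration. The Python sketch below assumes a small random matrix as a stand-in for a real scanner's forward operator, which in CT would be a discretised Radon transform.

```python
import numpy as np


def gradient_descent_reconstruction(A, y, n_iter=500):
    """Reconstruct x from y ≈ A @ x by gradient descent on 0.5 * ||A x - y||^2."""
    step = 1.0 / np.linalg.norm(A, 2) ** 2  # safe step: 1 / (largest singular value)^2
    x = np.zeros(A.shape[1])                # initial stab: a blank image
    for _ in range(n_iter):
        residual = A @ x - y                # mismatch between simulated and real data
        x = x - step * (A.T @ residual)     # move downhill
    return x


rng = np.random.default_rng(1)
A = rng.normal(size=(40, 10))   # hypothetical stand-in forward operator
x_true = rng.normal(size=10)
y = A @ x_true                  # noise-free measurements for this demo

x_rec = gradient_descent_reconstruction(A, y)
print(np.linalg.norm(x_rec - x_true))  # tiny: the iterations have converged
```

The step size is chosen from the largest singular value of A because steps that are too large make the iteration overshoot and diverge.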

Weaving AI through traditional maths

One way of bringing deep learning into this process is to train a deep learning model to recognise what a good scan image should look like. For example, having been shown many examples of good CT images, a model might learn that such images don't contain many hard edges: they look quite smooth. Given an image produced by traditional methods, the deep learning model can then improve it, smoothing out hard edges in an appropriate way.

The idea of improving an image isn't new. "Prior to deep learning it was also possible to enforce a [characteristic] such as smoothness in an image using mathematics," says Barbano. "You could say, I don't want the [pixel colours] to change too rapidly and enforce this through a mathematical object." The limitation of this is that you, as the human, need to know in advance what characteristics the image should have. A deep learning model, by contrast, might identify desirable characteristics (in the shape of statistical patterns in the images it's been trained on) that we as humans can't even see. "Deep learning can extract how things should look like from actual data and it can represent [the desired characteristics] through data," says Barbano.
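
The classical, hand-designed way of enforcing smoothness can be sketched as follows. One common choice (an assumption here; many others exist, such as total variation) is to add a penalty λ||Dx||² to the mismatch ||Ax - y||², where D takes differences between neighbouring pixels, so that rapidly changing pixel values cost extra. Setting the gradient to zero gives the closed form x = (A^T A + λ D^T D)^(-1) A^T y. The Python sketch below uses a one-dimensional "image" and a random stand-in forward operator.

```python
import numpy as np


def smooth_reconstruction(A, y, lam):
    """Minimise ||A x - y||^2 + lam * ||D x||^2, where D takes neighbouring differences."""
    n = A.shape[1]
    D = np.diff(np.eye(n), axis=0)        # (n-1) x n finite-difference matrix
    lhs = A.T @ A + lam * D.T @ D
    return np.linalg.solve(lhs, A.T @ y)


def roughness(x):
    """Sum of squared jumps between neighbouring pixels."""
    return float(np.sum(np.diff(x) ** 2))


rng = np.random.default_rng(2)
n = 30
A = rng.normal(size=(20, n))                # hypothetical operator: fewer measurements than pixels
x_true = np.sin(np.linspace(0, np.pi, n))   # a smooth 'true image'
y = A @ x_true + 0.1 * rng.normal(size=20)  # noisy measurements

for lam in [1e-3, 1.0, 100.0]:
    x = smooth_reconstruction(A, y, lam)
    print(lam, round(roughness(x), 3))      # roughness drops as lam grows
```

Here the human has decided in advance that "good" means "smooth", and λ sets how strongly that belief is enforced; a learned approach would instead extract such preferences from example images.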

Such a deep learning contribution can be woven into the classical iterative process. "For example you might do one step of gradient descent, then you do one step [using deep learning] to improve the image, then you do one step of gradient descent, and so on," says Denker.
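
Denker's recipe of alternating a gradient step with an improvement step can be sketched as follows. In this Python sketch the improvement step is a plain moving-average filter, a hypothetical placeholder for a trained network; schemes of this shape are often called "plug-and-play", because any denoiser can be plugged in. The forward operator is again a random stand-in.

```python
import numpy as np


def interleaved_reconstruction(A, y, improve, n_iter=200):
    """Alternate one gradient step on 0.5 * ||A x - y||^2 with one 'improvement' step."""
    step = 1.0 / np.linalg.norm(A, 2) ** 2
    x = np.zeros(A.shape[1])
    for _ in range(n_iter):
        x = x - step * (A.T @ (A @ x - y))  # physics: move towards agreement with the data
        x = improve(x)                      # prior: make x look more like a 'good' image
    return x


def moving_average(x):
    """Hypothetical stand-in for a trained network: light smoothing of neighbouring pixels."""
    return np.convolve(x, [0.25, 0.5, 0.25], mode="same")


rng = np.random.default_rng(3)
n = 30
A = rng.normal(size=(20, n))                # hypothetical forward operator
x_true = np.sin(np.linspace(0, np.pi, n))   # smooth 'true image'
y = A @ x_true + 0.1 * rng.normal(size=20)  # noisy measurements

x_smoothed = interleaved_reconstruction(A, y, moving_average)
x_plain = interleaved_reconstruction(A, y, lambda x: x)  # no improvement step
```

Swapping the moving average for a trained network gives the kind of hybrid scheme described above, while the gradient step keeps the physics of the scanner in the loop.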

It's an interesting approach to integrating deep learning with classical techniques, which capture our understanding of the physics involved. But maths4DL researchers are exploring other avenues too. Gradient descent is an example of an optimisation algorithm, which gradually improves potential solutions to a mathematical problem. Wong is investigating how optimisation could be done using deep learning. Rather than designing optimisation methods "by hand" using complex mathematics, the idea here is to train deep learning models to learn how to optimise directly from data.
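
A deliberately tiny caricature of "learning to optimise" is to let data choose a single ingredient of an algorithm, here the step size of gradient descent, instead of deriving it by hand. Learned optimisers of the kind described above typically replace whole update rules with neural networks; the random least-squares training problems and candidate step sizes in this Python sketch are illustrative assumptions only.

```python
import numpy as np


def loss_after(A, y, step, n_iter=20):
    """Run a fixed number of gradient-descent steps and report the final mismatch."""
    x = np.zeros(A.shape[1])
    for _ in range(n_iter):
        x = x - step * (A.T @ (A @ x - y))
    return 0.5 * float(np.sum((A @ x - y) ** 2))


rng = np.random.default_rng(4)
# A family of random training problems (hypothetical stand-ins for real scans).
training = []
for _ in range(50):
    A = rng.normal(size=(30, 10)) / np.sqrt(30)
    y = A @ rng.normal(size=10)
    training.append((A, y))

candidates = [0.05, 0.1, 0.2, 0.5, 1.0]
# 'Training': pick the step size with the lowest average loss on the examples.
avg_loss = {s: np.mean([loss_after(A, y, s) for A, y in training]) for s in candidates}
learned_step = min(avg_loss, key=avg_loss.get)
print(learned_step, avg_loss[learned_step])
```

Too small a step makes slow progress and too large a step makes the iteration blow up; the data, rather than a hand derivation, decides where the balance lies for this family of problems.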

Building foundations

The maths4DL research programme aims to put deep learning onto a sound mathematical foundation. Rather than seeing a particular deep learning method through to implementation in a real scanner, the job of Barbano, Denker and Wong is to address fundamental problems for which there still isn't a lot of theory.

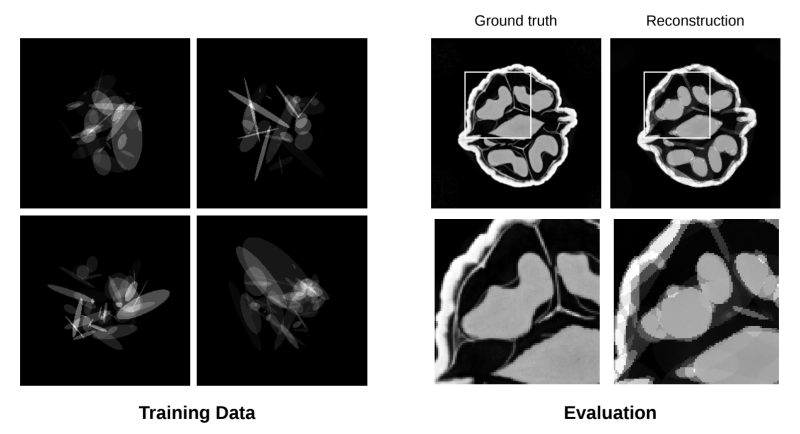

An example is the problem of generalisation, which plagues the field of deep learning as a whole: when you have trained a deep learning model on one data set, will it give correct answers for data it has never seen before? In medical imaging this problem is particularly acute. No two scanners are alike, even if they're of the same model, and a patient group from one hospital can be very different from the patients of another hospital. A human clinician can quite easily transfer their expertise from one scenario to another. But how can you make sure, mathematically, that a deep learning model is able to do the same?

The image below illustrates some of Barbano and Denker's work. To test their ideas, they trained a model on an artificial data set of images made up of ellipses (training data, left). When given a new set of measurements from a scan, the model then reconstructs an image made up of ellipses (testing data, right), because that's what it has learnt. The question is: how accurate can such a reconstruction be, and can we give precise bounds on this accuracy?
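
Synthetic training images of this kind are easy to generate. The Python sketch below draws random ellipses onto a pixel grid; the image size, number of ellipses and ranges of sizes and intensities are arbitrary assumptions, not the settings used in Barbano and Denker's experiments.

```python
import numpy as np


def random_ellipse_image(size=64, n_ellipses=5, rng=None):
    """Sum of randomly placed, rotated ellipses with random positive intensities."""
    rng = np.random.default_rng() if rng is None else rng
    # Pixel coordinates in [-1, 1] x [-1, 1].
    coords = np.linspace(-1.0, 1.0, size)
    X, Y = np.meshgrid(coords, coords)
    image = np.zeros((size, size))
    for _ in range(n_ellipses):
        cx, cy = rng.uniform(-0.5, 0.5, size=2)  # centre
        a, b = rng.uniform(0.1, 0.4, size=2)     # semi-axes
        theta = rng.uniform(0.0, np.pi)          # rotation angle
        intensity = rng.uniform(0.1, 1.0)
        # Rotate coordinates into the ellipse's own frame.
        u = (X - cx) * np.cos(theta) + (Y - cy) * np.sin(theta)
        v = -(X - cx) * np.sin(theta) + (Y - cy) * np.cos(theta)
        inside = (u / a) ** 2 + (v / b) ** 2 <= 1.0
        image[inside] += intensity
    return image


rng = np.random.default_rng(5)
training_images = [random_ellipse_image(rng=rng) for _ in range(100)]
```

Measurements for such images can then be simulated with a forward operator, giving the pairs of data and ground truth that a model is trained on.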

Answers to such fundamental questions, often provided by academic researchers, power industrial developments. Indeed, a handful of companies are now offering CT and MRI scanners that use deep learning in the reconstruction of images. A more common use of AI in medical imaging is post-processing, which employs deep learning to improve images after they have been produced by traditional scanners. But while this helps clinicians with diagnoses, it doesn't speed up the scanning process or reduce the radiation patients are subjected to. It will probably be some time yet before scanners with in-built AI features are routinely used.

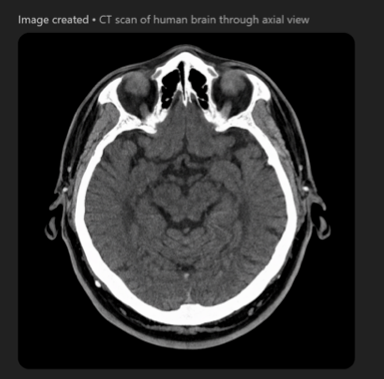

Coming back to the generative AI we mentioned at the start of the article, during our interview Denker and Barbano decided to conduct an experiment. They asked ChatGPT to produce a CT image. The result, shown below, claims to depict a human brain. "This is definitely not human, but maybe it's from an alien," said Barbano. ChatGPT is definitely not the way to go.

About this article

Riccardo Barbano and Alexander Denker are Post Doctoral Research Associates at University College London.

Hok Shing Wong is a Post Doctoral Research Associate at the University of Bath.

All three are members of the Mathematics for Deep Learning (Maths4DL) research programme.

Marianne Freiberger is Editor of Plus. She interviewed Barbano, Denker and Wong in March 2026.

This content is part of our collaboration with the Mathematics for Deep Learning (Maths4DL) research programme, which brings together researchers from the universities of Bath and Cambridge, and University College London. Maths4DL aims to combine theory, modelling, data and computation to unlock the next generation of deep learning. You can see more content produced with Maths4DL here.