What makes a modelling paper useful for policy?

Mathematical modelling is a key tool used throughout government to support policy and decision making. The COVID-19 pandemic provided a striking example: models were used to shape policies around school re-opening, the introduction of support bubbles, university re-opening, and many other policy decisions. But there is also a wide range of other policy areas that benefit from modelling, such as climate change, transport, and energy.

A mathematical model is a description of a process in the world around us in terms of mathematical expressions. You can use the model to get an idea of how the process may play out, or how this might change if circumstances change.

You can find out more, including how models are used, here.

Despite the usefulness of their work, modellers face a range of challenges when communicating their results to policy and decision makers. The complex ideas and technical language involved in modelling are difficult to communicate and easily misunderstood by people who aren't modellers. Mathematical models also tend to elicit extreme responses from these audiences, as Chris Whitty explained during a Modelling in Policy workshop in July 2023: some people see models as crystal balls while others ignore them completely.

The Modelling in Policy workshop was organised by teams at the University of Bristol and the Cabinet Office to improve how modellers and policymakers work together, identifying and sharing good practice. During the workshop, experts who have been involved in the policy making process in the UK, including those from policy, the civil service and the modelling community, reported on their experiences and the key lessons they have learned, particularly during the COVID-19 pandemic.

The following quotes from workshop participants capture the principles we identified that modellers could apply to make their work more useable for policy making:

- "Influence the influencers"

- "Work within the window of influence"

- "State the blooming obvious"

- "Allow results to be tested and explored"

- "Find the killer graph" but "make graphics professional and consistent"

- "Don't frighten people off with limitations"

- "Policy impact is not academic impact"

"Influence the influencers"

As a modeller you may be tempted to think that you need to be in a room with a minister in order to communicate your work to government. However, ministers are often time-poor and may not have the background to understand the technicalities of the modelling results.

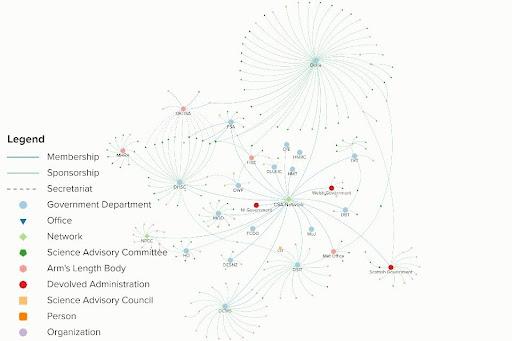

The most useful route to informing decision making is to influence the influencers: those who prepare advice for ministers (such as the secretariats and members of advisory committees) and those who are able to communicate this information directly to ministers (such as Cabinet Office staff). There are increasing numbers of scientists working in the civil service who have the background to understand models – you can use their skills in communicating complex results. The Chief Scientific Adviser (CSA) network is embedded in government departments. You can explore how the CSA network and other science advisory bodies fit within the government in an interactive map provided by the Government Office for Science.

Policymakers rely on people they have worked with before, or who are part of their trusted networks, to produce the models that are needed for policy. These trusted collaborators can then be brought into the policy making process when timescales are short.

A good example is the Joint Committee on Vaccination and Immunisation (JCVI), which routinely relies on unpublished research and works closely with modellers to identify scenarios that are of interest. The committee and subcommittee structures of the JCVI establish a trusted network of experts within the scientific community who are in a good position to be aware of currently unpublished research that may be useful in decision making.

Getting to know policy makers in the relevant parts of government, and the people who influence them, will help you understand how your research can support informed decision making. Times of emergency, such as in the early days of a pandemic, are not the ideal time to build a relationship from scratch.

"Work within the window of influence"

Policy making operates under very different timelines to academia. In academia the priority is quality, and academics often have the luxury of time to consider their results. But in government time is non-negotiable. Longer timescales may sometimes be available to gradually scope out an area, but important decisions are often taken extremely rapidly. To quote Chris Whitty, Chief Medical Officer for England, again: "an 80% right paper before a policy decision is made is worth ten 95% right papers afterwards."

As an academic you need to adjust to tight timescales if your work is going to have an impact. For example, peer review might not be possible. Instead, shorter reports can be shared as pre-prints to gather feedback from the modelling community. To manage within tight timelines, policy makers work with the trusted networks and established relationships mentioned above.

"State the blooming obvious"

When presenting your work be aware that the final decision makers, such as ministers, are likely to have less than 15 minutes of time and attention. Although you might not be the person briefing them, think through what needs to be communicated to them. Distil the information down to the key messages and provide further evidence, clearly written, should they want to know what backs up those messages.

Remember that what is obvious to you may be unfamiliar to non-scientists, and try to pre-empt their questions. As well as avoiding or explaining technical jargon, you should also state any apparently self-evident assumptions – such as the horizontal axis in a graph representing time. Encourage your audience of policy makers to ask questions to help them understand the results and build their own intuition. Provide non-technical summaries conveying the key insights, alongside an academic account of any modelling results. Storytelling and analogies can be a way to quickly convey these main messages and help non-scientists understand the results intuitively. An excellent example of this is Jonathan Van-Tam's use of football analogies during the COVID-19 pandemic (when he was Deputy Chief Medical Officer) to support public health messaging.

"Allow results to be tested and explored"

The goal of modelling is to understand relationships between factors that are important in the process that is being modelled.

A key misconception is that models are designed to produce accurate and specific predictions of the future. This misconception feeds mistrust: if your audience does not agree with an assumption, or with the modelling approach, then they may discard outputs as inaccurate. Another factor that feeds into mistrust is the complexity of many models, which turns them into black boxes for all but experts in the field.

One way to address these issues is to allow others to interact with your work, enabling them to retrace, check, test, and experiment, to form their own intellectual picture of the process in question and the factors at play, and to differentiate between predictions and qualitative understanding.

For example, you can present results in an interactive format, such as interactive charts that allow users to change parameter values or input their own data and observe the change in output. Examples of this include simple interactive graphs built using standard software (such as the example in the article What's the price for relaxing the rules?), or interactive charts such as the census maps produced by the ONS. Providing policymakers with access to your data and results in this way enables them to find relevant information and pose their own questions in their own time, allowing them to form their own world view.
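
As a rough illustration of what sits behind such an interactive chart, the Python sketch below wraps a very simple epidemic model in a function whose input can be changed. The model (a basic susceptible-infectious-recovered scheme), the function names and all parameter values are assumptions made for this example; they are not taken from any of the models mentioned in this article.

```python
def simulate_epidemic(contacts_per_day, days=200, population=1_000_000,
                      initial_infected=10, infection_prob=0.05,
                      infectious_days=5.0):
    """Toy discrete-time SIR model (illustrative only).

    Returns a list with the number of infectious people on each day.
    All default values are hypothetical.
    """
    beta = contacts_per_day * infection_prob   # daily transmission rate
    gamma = 1.0 / infectious_days              # daily recovery rate
    susceptible = float(population - initial_infected)
    infectious = float(initial_infected)
    history = [infectious]
    for _ in range(days):
        # New infections cannot exceed the number of people still susceptible.
        new_infections = min(susceptible,
                             beta * susceptible * infectious / population)
        recoveries = gamma * infectious
        susceptible -= new_infections
        infectious += new_infections - recoveries
        history.append(infectious)
    return history


def peak_infections(contacts_per_day):
    """The largest number of people infectious on any single day."""
    return max(simulate_epidemic(contacts_per_day))


if __name__ == "__main__":
    # The "slider": try different average numbers of daily contacts.
    for contacts in (4, 8, 12):
        print(f"{contacts} contacts per day -> peak of "
              f"{peak_infections(contacts):,.0f} infectious people")
```

An interactive chart would connect a slider to `contacts_per_day` and redraw the curve each time it moves. The point is less the specific numbers than letting users see for themselves how the output responds when an assumption changes.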

A final point to consider when it comes to enabling people to explore your results is whether these results might inform a "decision tree" for policy, indicating turning points or red lines which, when reached, should trigger a change in policy. One policy example was the roadmap out of lockdown during the COVID-19 pandemic, informed by modelling by members of the University of Warwick and the JUNIPER modelling consortium. The approach, referred to as "data not dates", set out the conditions that needed to be met at particular points in time for restrictions to be lifted further. There is a strong case for doing more of this type of modelling that contributes to such tramlines for decision making, including helping policy makers to understand what future scenarios to consider.
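
The idea of red lines that trigger a change in policy can be sketched in a few lines of Python. This is a minimal illustration only: the indicator names, the limits and the function names are hypothetical and do not reproduce the conditions used in the actual roadmap.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RedLine:
    """A hypothetical condition: the indicator must not exceed the limit."""
    indicator: str
    limit: float


def breached_red_lines(indicators, red_lines):
    """Return the names of all indicators that are above their limit."""
    return [line.indicator for line in red_lines
            if indicators[line.indicator] > line.limit]


def next_step_allowed(indicators, red_lines):
    """The next step goes ahead only if no red line has been crossed."""
    return not breached_red_lines(indicators, red_lines)


if __name__ == "__main__":
    # Hypothetical red lines and hypothetical current data.
    red_lines = [
        RedLine("hospital_admissions_per_day", 500.0),
        RedLine("reproduction_number", 1.0),
    ]
    current = {"hospital_admissions_per_day": 320.0,
               "reproduction_number": 1.1}

    print("Proceed to next step:", next_step_allowed(current, red_lines))
    print("Red lines crossed:", breached_red_lines(current, red_lines))
```

Writing the conditions down explicitly, separately from the data, makes it clear in advance which observations would change the decision. Modelling can then help explore which limits are sensible.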

"Find the killer graph" but "make graphics professional and consistent"

There are strong opinions about whether graphs or narrative are the best way to communicate with non-scientists. What was agreed on was that graphs should be used sparingly and consistently, so that decision makers do not have to repeatedly learn to interpret new formats.

When using visuals, make sure they are consistent, clearly annotated and that they reflect common-sense assumptions (e.g. that the horizontal axis represents time). Complex graphs in particular may be overlooked if they are not easy to interpret, and advice from scientific advisors was to focus on conveying one clear message per chart. Think carefully about how to represent uncertainty.

Be aware that not everyone prefers a visual format. To aid interpretation, include explanatory text and annotate graphs to highlight important features. Present your graphics professionally – rough output might be acceptable for early work but can affect credibility and uptake later. Also make your visuals reproducible by others, for example by allowing access to the underlying data.

"Don't frighten people off with limitations"

Many models used for policy decisions are complex, with multiple assumptions and limitations – achieving the right balance between certainty and limitations can be challenging. The aim is for an equilibrium that allows informed decision-making without being overcautious or unduly confident in model results.

As with timeframes, there is a difference between cultures. As a modeller you are trained to emphasise the limitations of your models, but in the policy arena an emphasis on uncertainty can be interpreted as a lack of reliability and lead to inaction. Don't be intimidated by this. Be clear that understanding limitations is an integral part of modelling and that limitations themselves provide important information – they help us understand what we can be certain of. Don't be afraid to share your views on how your model can usefully inform policy decisions.

At the same time, recognise the boundaries of your work and don't overstate its capabilities. Acknowledge the wider expertise, beyond modelling, that contributes to policy making and recognise that different evidence can bring competing pressures to decision making.

One approach that has proved valuable in building trust in modelling results involves presenting a consensus view that brings together different approaches to modelling. Such a consensus approach was used by SPI-M-O during the COVID-19 pandemic. If you can present multiple models with different approaches that show similar results, this will give policy makers more confidence in these results.

Generally, approach the communication of limitations with tact to avoid discouraging your audience, and acknowledge the collective wisdom inherent in consensus views. Being open about both what a model can tell us and where its limits lie gives policy makers a more robust and nuanced understanding, and supports responsible decision making in the face of complexity.

"Policy impact is not academic impact"

Another barrier between modellers and policymakers is the tension between the goals of academic success (including new results, academic rigour, publishing through the peer review process) and the practicalities of policy making. As a result, academic papers are often not useful for policy. Conversely, work that might not count towards academic achievement can still have significant policy impact.

Interaction between academics and policy makers is, however, increasingly recognised as beneficial and distinct from academic impact. Research does not have to be novel to influence policy; instead it should draw on a range of appropriate techniques and approaches. Extensive knowledge of a field, including others’ work, is highly valued in policy contexts.

As academics we are trained to be experts. We focus on a particular area and only publish new results in which we have a high degree of confidence. We're averse to speculation. Those from a mathematical background, especially, will typically work towards something resembling a proof before sharing their work with the wider academic community.

There are situations when a particular area becomes relevant to policy and long-standing experts are called on for advice, for example in the work of the JCVI. However, more often than not, the scientists who become involved with policy are those who are able to quickly adapt their expertise to current questions. The COVID-19 pandemic again provides a useful example: few of the modellers who worked on the government response were experts on coronaviruses before the pandemic. Instead, many adapted their knowledge — from seasonal influenza, veterinary diseases, and other pathogens — to deal with the new threat.

If you would like to contribute to policy, don't wait for your expertise to be called upon. Scan the horizon, for example by looking at the National Risk Register, for areas you may be able to contribute to. Don't underestimate your wider scientific training and knowledge — what is considered trivial in your area of research may well be useful news outside of it, or outside of science.

Don't be afraid to jump in, even with results that in an academic context would be considered incomplete. The hesitancy that is standard etiquette in academia can lead to complete inaction in the policy landscape when specialised expertise in a particular area simply doesn't exist.

Be trustworthy

A final and very important point to note – trustworthiness underpins all impactful communication. The philosopher Onora O'Neill has provided key insights into trustworthiness, including intelligent transparency, characterised by the four features below (outlined in the Royal Society's Science as an open enterprise report). The principles for impactful modelling described above give modellers practical ways to embed these features of trustworthiness into their interactions with policy makers.

- Accessible

Policy makers should be able to find your work when they need it. By building relationships with them, being flexible to their needs and working within the window of influence, you make your research accessible to those who can use it to inform their policy making.

- Intelligible

Knowing the people who will be using your work allows you to communicate it in the language they use. Established two-way communication also encourages policymakers to ask questions and allows you to check that they understand your work.

- Useable

Understanding the environments and contexts in which policy is developed enables you to produce modelling that answers policy makers' needs, when they need it.

Being clear about why your work is useful, as well as about the limitations of the modelling, allows policy makers to understand its strengths and limitations when developing policy, and enables them to convey this in a compelling narrative to decision makers and the public.

- Assessable

Allowing your results to be tested and explored provides a bridge to overcome one of the key challenges for modelling: non-experts tend to be either true believers or absolute sceptics. Enabling them to explore your work and answer their questions in their own time builds a deeper understanding of, and trust in, your work.

We hope that our principles are useful for modellers who would like to engage directly with policymaking. These ideas might complement those set out in Harnessing the power of mathematical models for better policy decisions, a briefing published by the Academy for the Mathematical Sciences aimed primarily at policymakers.

Ultimately, the more modellers communicate their work to people outside the academic community, the more embedded the central ideas of modelling will become in the minds of these audiences, and the easier the communication will become. This will increase the impact that mathematical modelling can have, both in day-to-day Government decision making and in national emergencies.

About this article

Ellen Brooks Pollock is Professor of Infectious Disease Modelling, Ewan Colman is a Senior Research Associate in Mathematical Modelling of Infectious Diseases, and Leon Danon is Professor of Infectious Disease Modelling and Data Analytics, all at the University of Bristol.

Rachel Thomas and Marianne Freiberger are the Editors of Plus.

This article is part of our collaboration with JUNIPER, the Joint UNIversities Pandemic and Epidemiological Research network. JUNIPER is a collaborative network of researchers from across the UK who work at the interface between mathematical modelling, infectious disease control and public health policy. You can see more content produced with JUNIPER here.